By Adam Hartley • February 18, 2026

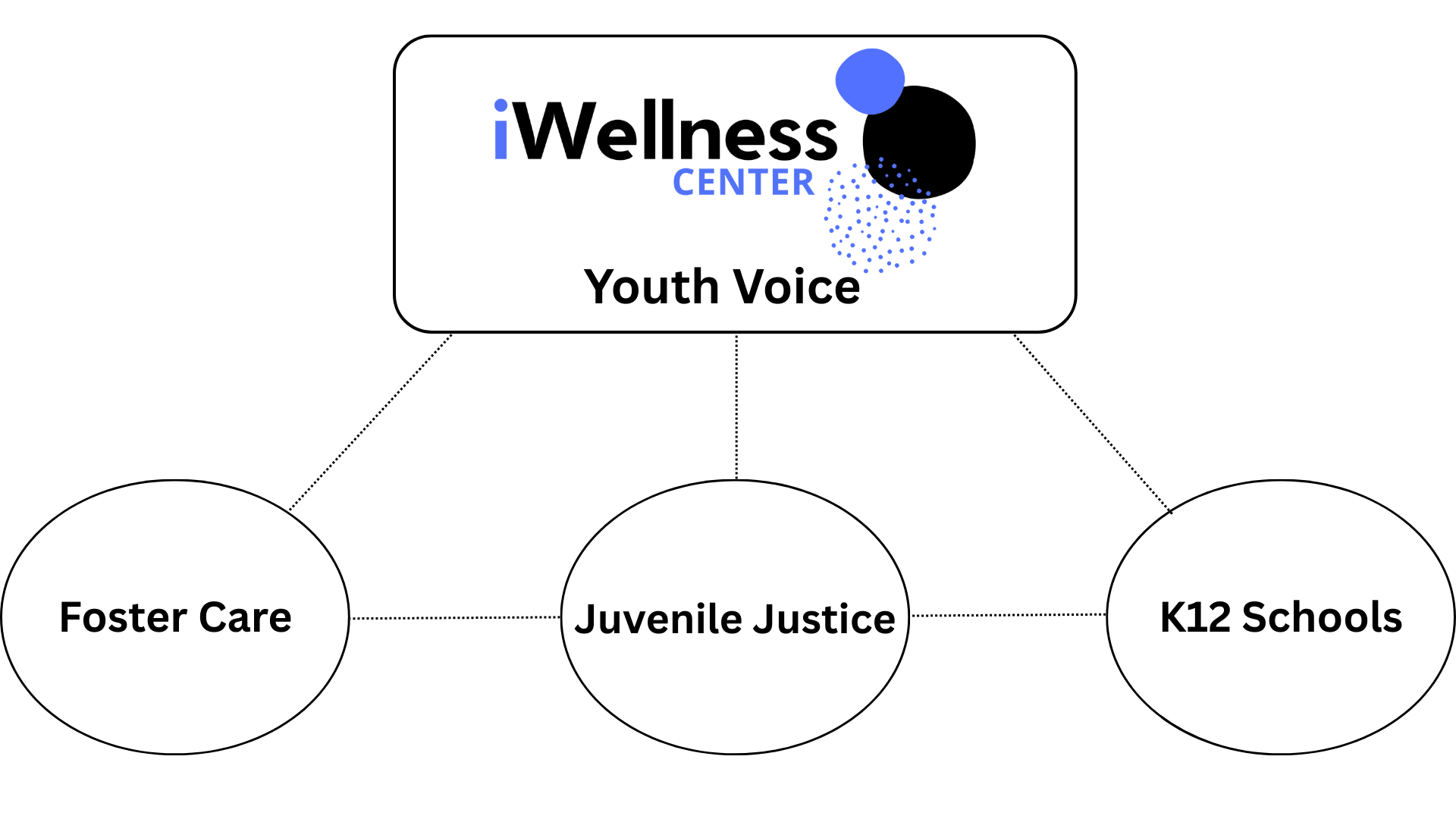

In juvenile justice and foster care systems, we often know a young person is struggling only after a crisis has already occurred. A placement disruption. A disciplinary incident. A mental health emergency. By then, the opportunity for early, targeted support has passed, and the costs, both human and financial, have multiplied. The question is not whether these systems care about the young people they serve; they most certainly do. The question is whether they have the tools to identify needs early enough to make a difference.

The Cost of Waiting

Research consistently demonstrates the consequences of delayed intervention. In juvenile justice settings, 60-70% of detained adolescents meet criteria for at least one mental health disorder, compared to 20% of the general adolescent population (Duchschere et al., 2023). More concerning, mental health symptoms often worsen during detention, and detention itself is linked to lower educational attainment and increased risk of adult recidivism.

In foster care systems, placement instability compounds trauma and delays permanency. Studies show that children experiencing unstable placements face a 36-63% increased risk of behavioral problems compared to those who achieve stable placements, even after accounting for their baseline challenges at entry into care (Rubin et al., 2007).

Yet most systems still rely on periodic assessments, often conducted only once or twice per year, or worse, only when a crisis triggers a referral. This approach leaves long gaps where disengagement, distress, and unmet needs go unnoticed. The result: systems spend more time and resources managing crises than preventing them.

What Early Intervention Actually Means

Early intervention doesn't mean predicting the future or diagnosing mental health conditions. It means creating regular opportunities to check in with young people, identify changes in their experience, and respond before small concerns become larger problems.
In practice, this requires:

- Frequent touchpoints: Regular, structured check-ins that capture how a young person is doing right now
- Consistent measurement: Using the same questions over time to identify trends and changes
- Accessible data: Real-time information that staff can actually use to inform decisions
- Clear protocols: Defined processes for responding when concerns are identified

Traditional assessment models like annual screenings or crisis-triggered evaluations were not designed for early identification; they were designed to respond to problems that have already surfaced.

The Role of Data in Supporting Young People

When juvenile justice and foster care systems implement frequent wellness check-ins, they gain visibility into the lived experience of the young people they serve. A youth in a detention facility who reports declining feelings of safety or connection. A child in foster care whose responses suggest growing disengagement from their placement.

This isn't about surveillance. It's about creating structured opportunities for young people to share how they're doing, and ensuring that information reaches the adults who can respond.

Frequent data collection serves multiple functions:

- Early warning: Identifies concerning trends before they escalate to crisis
- Progress monitoring: Tracks whether interventions are working
- Resource allocation: Helps systems direct support where it's most needed
- Youth voice: Gives young people a regular, structured way to communicate their experience

The key is frequency. Checking in once or twice a year provides a snapshot. Checking in weekly or biweekly provides a timeline, one that reveals patterns, changes, and opportunities for intervention.

How iWellness Supports These Systems

iWellness was designed as a Tier-1 universal screener: a tool for regular, structured feedback that identifies disengagement, risk, and unmet needs early.
While originally developed for K-12 schools, the platform serves juvenile justice facilities, foster care systems, and other public-sector environments facing similar challenges. The platform provides:

- Frequent check-ins: Weekly or biweekly surveys (up to 40 data points per year, versus an industry norm of 1-2)
- Simple, validated questions: A 7-question check-in using a 4-point scale, designed for clarity and consistency
- Real-time alerts: Staff are notified when responses indicate concern (e.g., two consecutive high-risk surveys, sudden changes in responses)
- Customizable dashboards: Role-based access to data at individual, group, and system levels
- Self-service resources: 24/7 access to categorized activities and exercises for youth

iWellness is not a diagnostic tool. It does not replace counselors, clinicians, or case managers. It is a decision-support platform that helps staff identify who needs support, when they need it, and whether interventions are making a difference.

What the Data Shows

Systems using iWellness report increased engagement with student support services and improved ability to identify youth needing intervention before crises occur. Year-over-year trends show more positive survey responses and earlier connections to support.

This isn't about preventing every crisis or solving every problem. It's about shifting from reactive to proactive, and giving staff the information they need to intervene earlier, target resources more effectively, and track progress over time.
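For readers who want to see how alert criteria like the ones mentioned above (two consecutive high-risk surveys, or a sudden change in responses) might work in practice, here is a minimal Python sketch. It is an illustration only, not iWellness's actual logic: the 7-question, 4-point format comes from the description above, but the scoring direction (higher means doing better), the `HIGH_RISK_MEAN` and `SUDDEN_DROP` thresholds, and the names `CheckIn` and `alerts_for` are all assumptions made for this example.

```python
from dataclasses import dataclass
from statistics import mean

# All thresholds and scoring conventions here are hypothetical
# illustrations, not iWellness's actual rules.
NUM_QUESTIONS = 7            # from the article: 7-question check-in
SCALE_MIN, SCALE_MAX = 1, 4  # from the article: 4-point scale
HIGH_RISK_MEAN = 2.0         # assumed: a mean at or below this is "high-risk"
SUDDEN_DROP = 1.0            # assumed: a drop in mean this large is "sudden"


@dataclass
class CheckIn:
    week: int
    answers: list[int]  # one value per question; higher = doing better (assumed)

    def score(self) -> float:
        """Validate the answers and return their mean."""
        if len(self.answers) != NUM_QUESTIONS:
            raise ValueError(f"expected {NUM_QUESTIONS} answers")
        if any(a < SCALE_MIN or a > SCALE_MAX for a in self.answers):
            raise ValueError("answer outside the 4-point scale")
        return mean(self.answers)


def alerts_for(history: list[CheckIn]) -> list[str]:
    """Return alert reasons based on the two most recent check-ins."""
    if len(history) < 2:
        return []  # not enough data to compare
    ordered = sorted(history, key=lambda c: c.week)
    previous, latest = ordered[-2].score(), ordered[-1].score()
    reasons = []
    if previous <= HIGH_RISK_MEAN and latest <= HIGH_RISK_MEAN:
        reasons.append("two consecutive high-risk surveys")
    if previous - latest >= SUDDEN_DROP:
        reasons.append("sudden change in responses")
    return reasons
```

Calling `alerts_for` with a young person's check-in history returns a list of reasons, which is empty when neither rule fires. In a real deployment, any such thresholds would be set with clinical input, and an alert would prompt a conversation with a staff member rather than an automated decision.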

Implementation Considerations

Implementing a wellness monitoring platform requires planning:

- Staff training: Counselors, case managers, and support staff need clear guidance on interpreting data and responding to alerts
- Integration with existing systems: Data should inform case planning, treatment decisions, and resource allocation
- Privacy and compliance: All data handling must meet FERPA, HIPAA, and other relevant regulations (iWellness is fully compliant)
- Youth engagement: Check-ins work best when young people understand their purpose and see that their input leads to support

Most systems complete onboarding, including training, rostering, and first survey deployment, within about one month.

Moving Forward

Juvenile justice and foster care systems face complex challenges with limited resources. Early intervention won't solve every problem, but it can help systems use their resources more effectively: identifying needs sooner, targeting support more precisely, and tracking outcomes more consistently.

The question is whether your system has the tools to identify needs early enough to act on them.

If you're interested in learning how frequent wellness check-ins could support your system's goals, we'd welcome a conversation about your specific context and needs.

References

Duchschere, J. E., Reznik, S. J., Shanholtz, C. E., O'Hara, K. L., Gerson, N., Beck, C. J., & Lawrence, E. (2023). Addressing a mental health intervention gap in juvenile detention: A pilot study. Evidence Based Practice in Child and Adolescent Mental Health, 8(1), 46-68.

Rubin, D. M., O'Reilly, A., Luan, X., & Localio, A. R. (2007). The impact of placement stability on behavioral well-being for children in foster care. Pediatrics, 119(2), 336-344.